On a more positive front, while waiting to complete the electronics work, I decided to start building some N-scale structure kits that I have lying around. I bought this Walthers Interstate Oil kit at a train show years ago. I got it really cheap, for $10. It came out pretty well and I think it will go well in the town scene.

Town and country - automated N-scale layout

- Thread starter kleiner

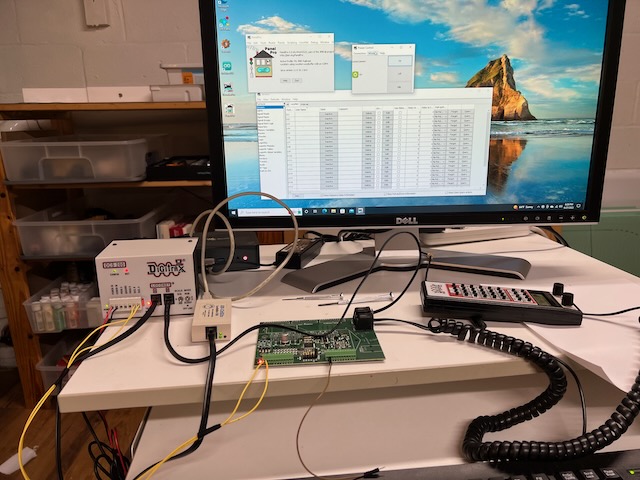

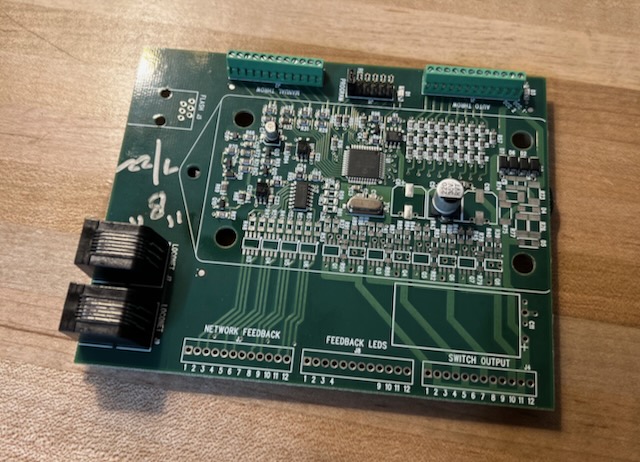

Finally! After a struggle, I was able to get the sensor boards from DCC Specialties programmed properly. I had to use both JMRI and a throttle to program the boards. It really helps to have a proper programming setup for complex tasks like this. Next step is to get these boards installed on the layout and test the sensors. Keeping my fingers crossed.

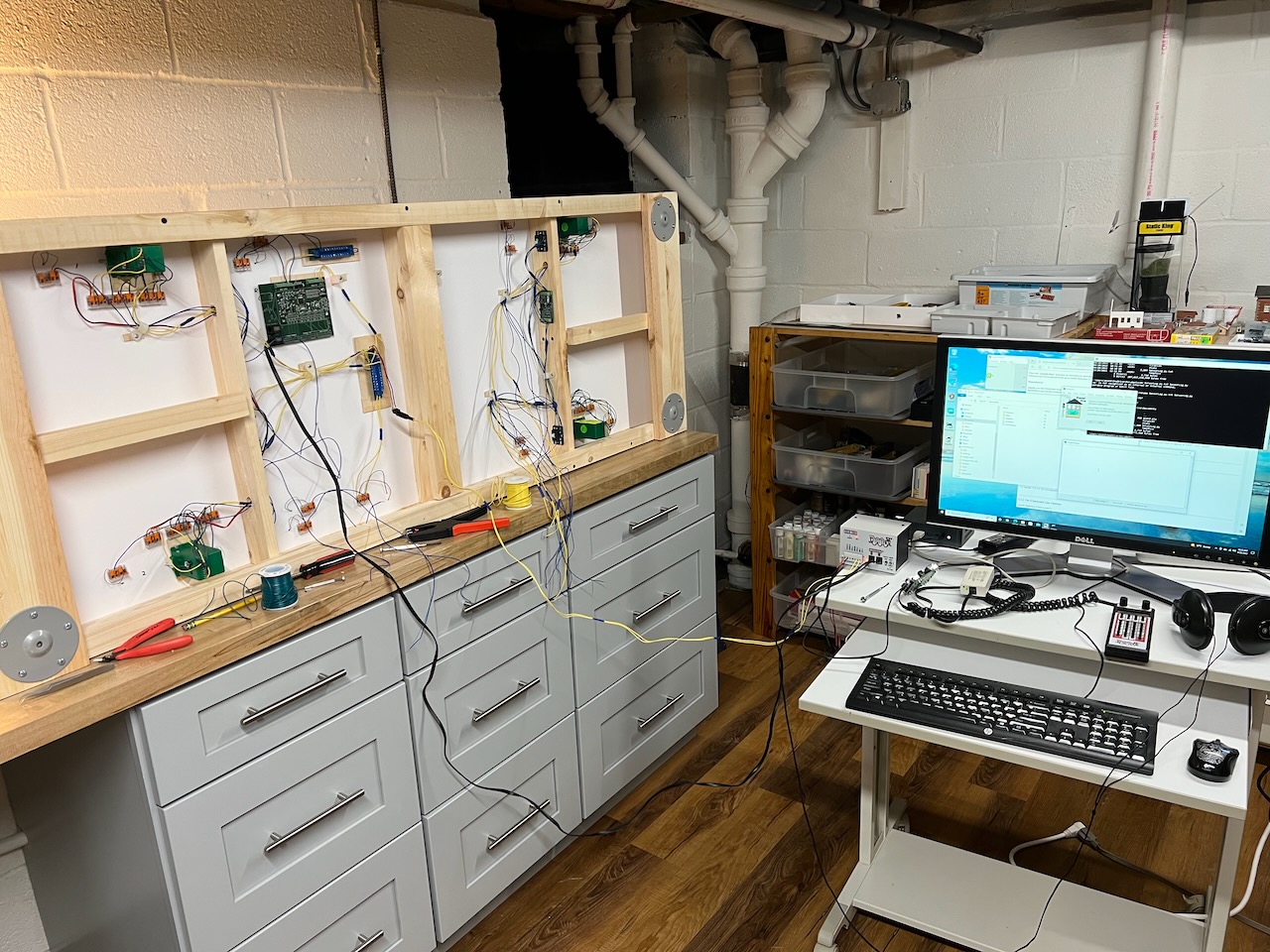

I think that a Boeing 787 probably has a simpler wiring harness.

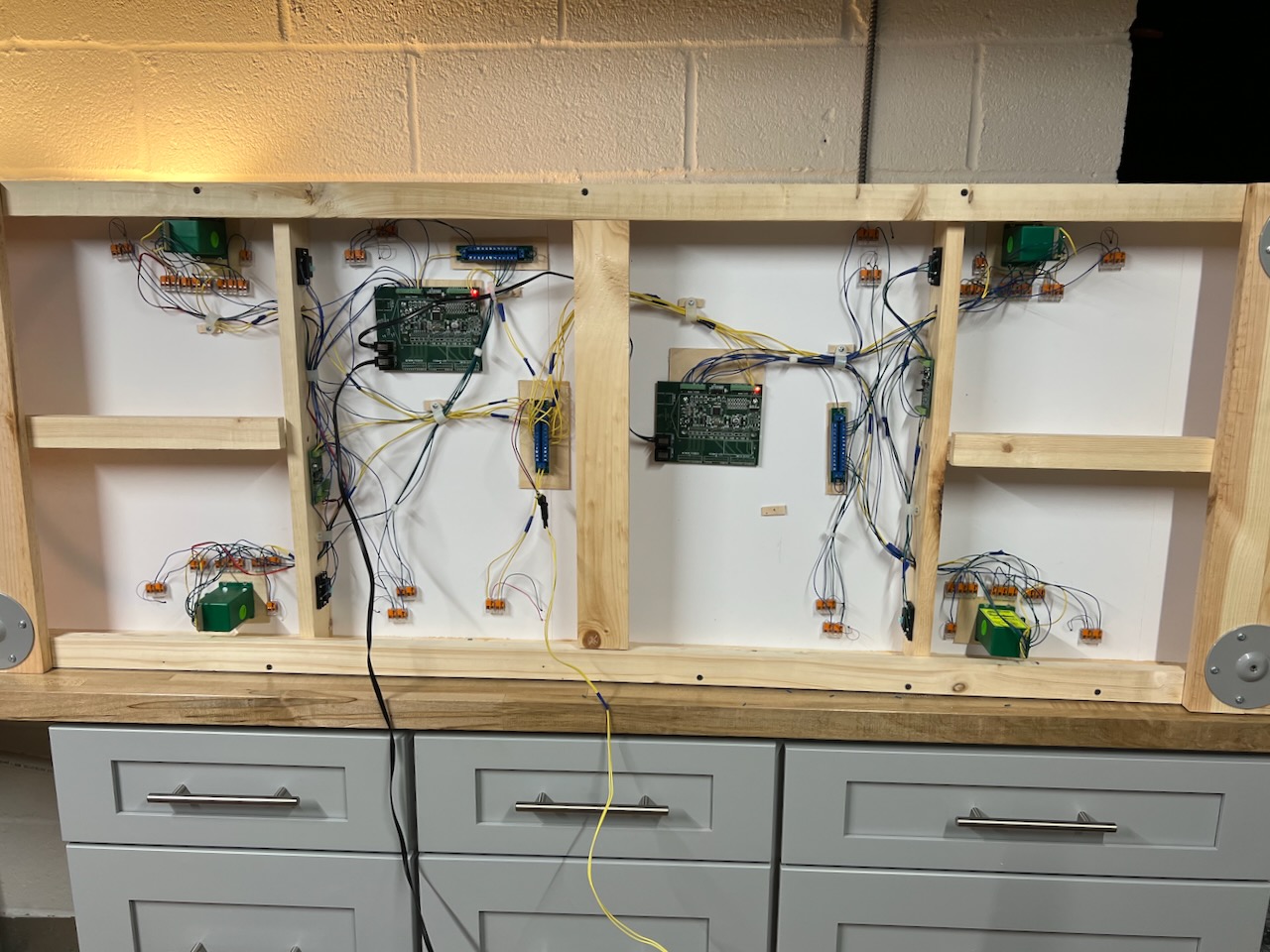

I have made good progress installing the new boards. The boards work very well and I have been testing out the sensors as I wire them up. One good side effect of switching to the DCC Specialties boards is that the wiring will actually become much simpler once I am done. It just looks messy for now.

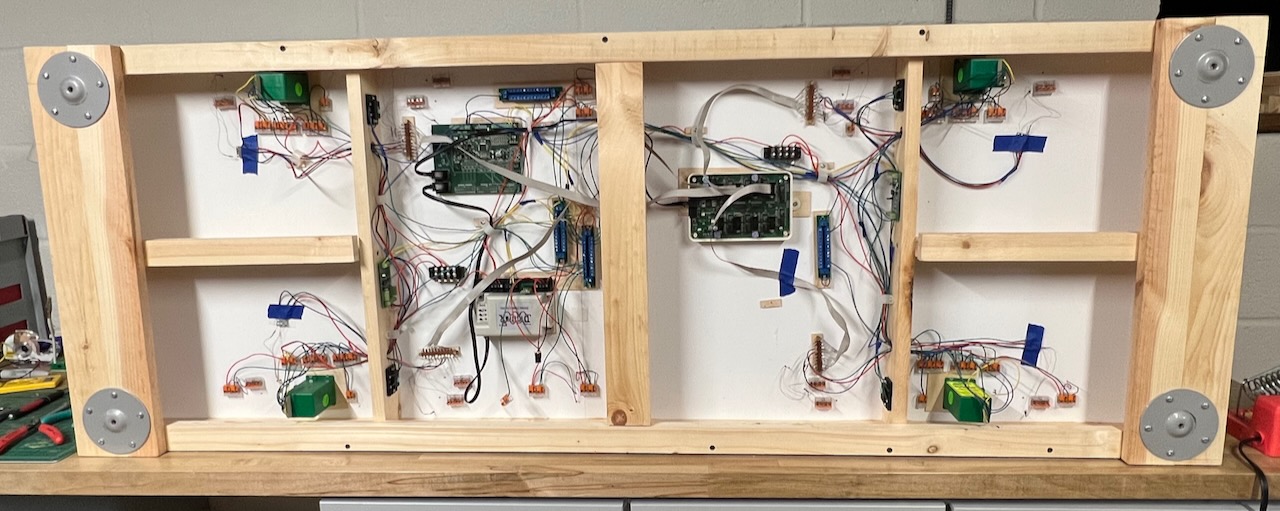

Finally done installing the DCC Specialties Jack 64 Discrete sensor boards. I tested all the sensors and they all work perfectly. Also, I have drastically reduced the number of wires going to the layout. Now it's just DCC track power and Loconet. Looks so much cleaner. I wish I had just gone with these boards right from the beginning, as I would have saved so much time. These boards were very reasonably priced at $40 each.

Next step is to get JMRI set up properly and to get started on programming the control script for the trains. The good thing is that I already did this once many years ago, so I have a fairly good idea of how to proceed.

gjohnston

Slow Learner

I just read your post concerning the DCC Specialties Jack AD Sensor board. I just ordered one to report block occupancy on my reverse loops from the two PSX-ARs. You said your sensor boards came with no manual or documentation. How did you figure out how to program them? I am hoping mine comes with documentation. But if it doesn't I won't know what to do. Did you email the people at Tony's Trains for further information?

Thanks.

Greg

I did email them and they sent me a Word document with the manual. I am enclosing the PDF of the manual as an attachment. The confusing thing is that the Loconet address is not the same as the DCC address. Once you get your addresses figured out, it works very well.

gjohnston

Slow Learner

Thank you so much. This will be very helpful.

Ok - finally a lot of updates!

First big change is in the way I detect trains. Recall that this is supposed to be a fully computer-controlled layout. After trying out the reed switches, I got rid of them as they were not as reliable as I would have liked. Instead, I am now using a Digitrax BXP88 feedback encoder, which depends on detecting track current draw. I still have one Jack 64 feedback encoder, however, as I want to detect the position of the turnouts.

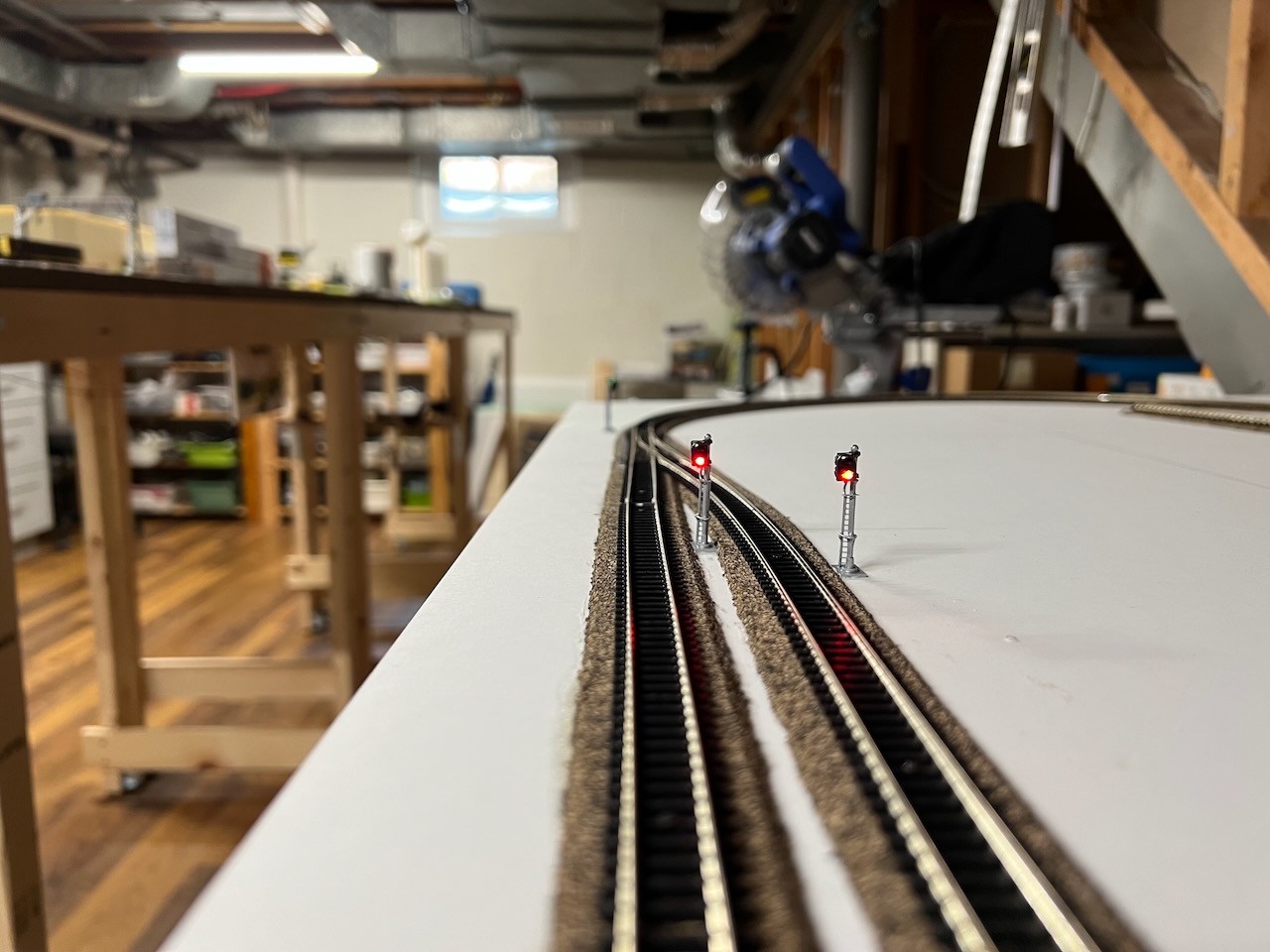

Second big thing I have done is that I have installed signals. This is something I have always wanted on a layout and I have finally managed to get it done. It was a huge undertaking as the signals are so delicate and hard to install. I have just gone with simple two-aspect signals as they were the easiest to install. The signals are driven by a TC-64 board that I bought many years ago from RR-CirKits. This is a Loconet device that can directly drive the signals and is easily controlled from JMRI.

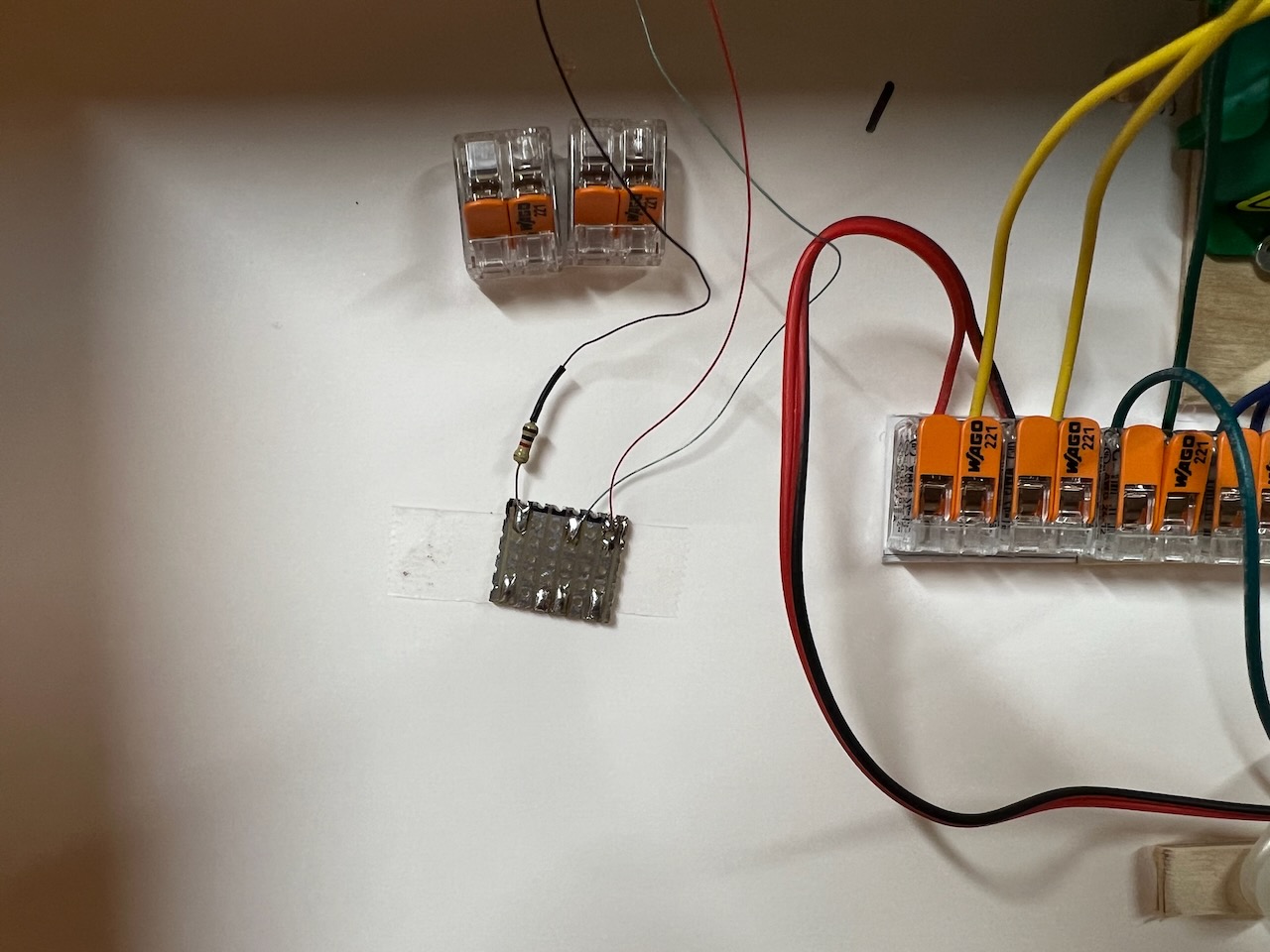

A view of the underside of the layout right now in the next pic. There is a lot of wiring even for such a small layout.

One of the N-scale signals - they are definitely not easy to install.

Under the layout, I have to use small pieces of vector board to solder onto the leads.

(Note the Wago connectors - I love them!)

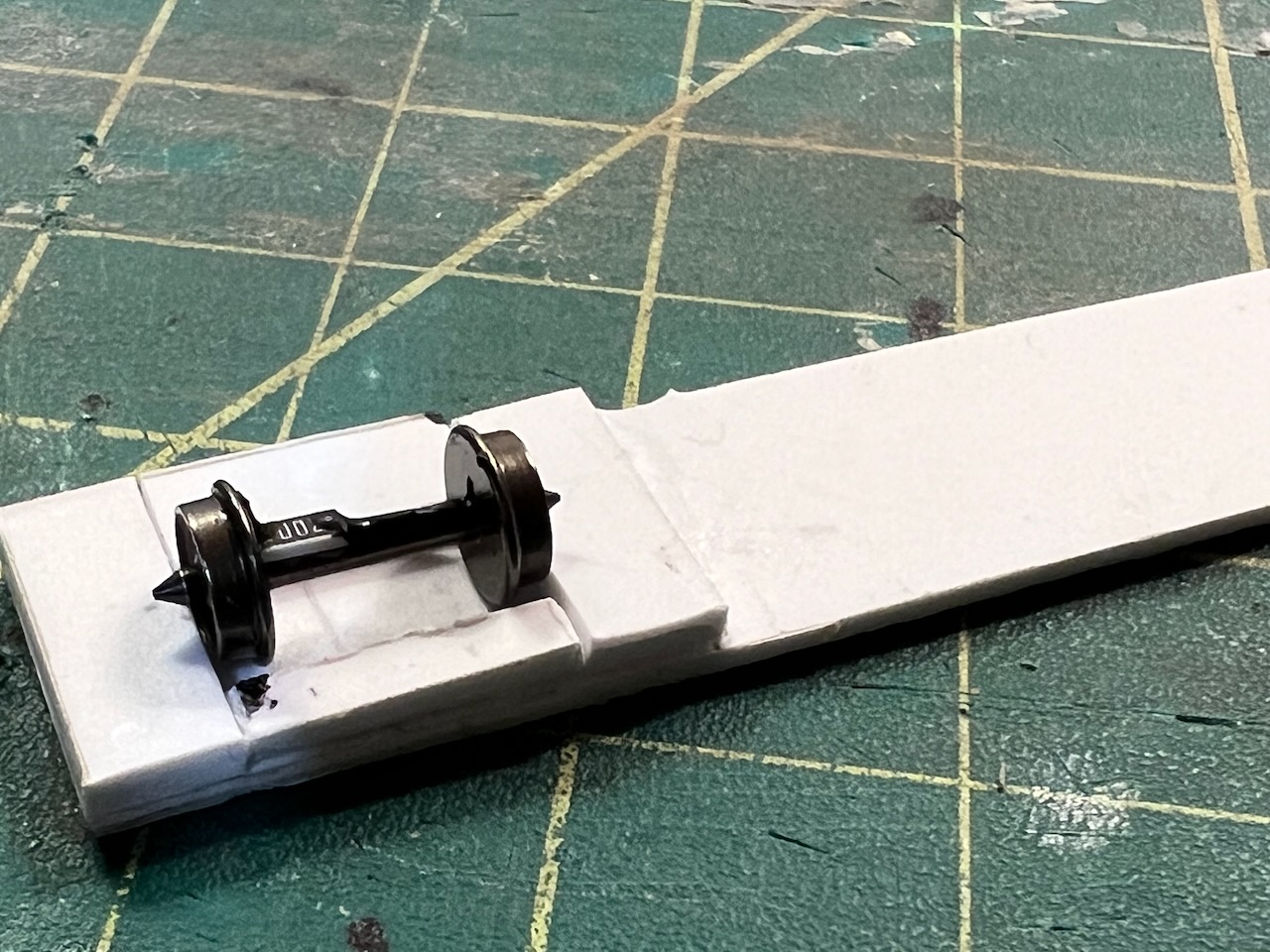

Finally, I figured out a way to install resistors on wheel sets - these are 10K surface mount resistors installed on 33" Intermountain wheel sets. I built a little jig to hold the wheels securely when installing the resistors.
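As a rough check on what a 10K resistor wheel set looks like to a current-sensing detector, Ohm's law gives the current per axle. The sketch below assumes a track voltage of about 12 V, which is a typical N-scale DCC figure and not something measured on this layout; the function names are made up for illustration.

```python
def axle_current_ma(track_volts: float, resistor_ohms: float) -> float:
    """Current drawn by one resistor wheel set, in milliamps (I = V / R)."""
    return track_volts / resistor_ohms * 1000.0


def axle_power_mw(track_volts: float, resistor_ohms: float) -> float:
    """Power dissipated in the resistor, in milliwatts (P = V^2 / R)."""
    return track_volts ** 2 / resistor_ohms * 1000.0


TRACK_VOLTS = 12.0      # assumed value, not measured on this layout
RESISTOR_OHMS = 10_000  # the 10K resistor mentioned in the post

print(f"{axle_current_ma(TRACK_VOLTS, RESISTOR_OHMS):.2f} mA per axle")
print(f"{axle_power_mw(TRACK_VOLTS, RESISTOR_OHMS):.1f} mW per resistor")
```

Under that assumption each resistor axle draws about 1.2 mA. Whether that is enough to trip a given detector depends on its sensitivity setting, so treat the number as a starting point only.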

I just love the appearance of the signals and the layout looks really great when they are all properly lit up. Makes the effort to install them worthwhile. I can't wait to get started with the scenery but that will have to wait until the electrical stuff is fully sorted out.

I tested all of the signals before installing them but even so, the green light stopped working on one of them so I will have to install a new signal.

Smudge617

Well-Known Member

Some LEDs can be replaced rather than buying a new one.

I did try to do it, but the LED seems to be molded into the signal. It was just simpler to order a few more from Amazon and be done with it.

Hutch

Well-Known Member

This is where some BLI locos come in. You can record macros with them and have them stopping and going and whistling while you run other trains. At least, that's what they tell me. I haven't tried it yet.

Kleiner - Wow, that made my brain hurt when I looked under the skirts of Computer Vision Neural Network theory. I will go out on a limb here: You are looking at multiple cameras using 'Face Detection' to determine what train is what and where. Is one camera directly overhead with a 'whole layout view', which would look to be the camera to determine the location of a train or trains? If a frame is processed at 1/2 second intervals, location may not be precise, although close enough for what is wanted. Wouldn't you also need other cameras just above the horizontal plane, at some location where foreground objects are not blocking, to determine more specific things about each train to help with identity? I.e., if you happen to have multiple GP30s from a specific vendor, which could be identical from the top view, you would need to ID each according to the engine number or some other outstanding feature, using a side view while it is moving. Again, I guess that you could grab a specific frame every 1/2 second or so to process. To me that would be a ton of processing. Looked quick at the Jetson Nano. Quad core ARM processor running at 4 GFLOPs/sec with a bunch of RAM. Is that for each core, total or GPU? I suspect that it would reach its limits sooner rather than later. This is going to be interesting to follow!

I am also working toward some sort of smart control of trains that can circle the layout without my intervention while I am running a local or switching the yard somewhere. I have been using Atmel (now Microchip) devices as my go-to devices. My layout is bigger (30 x 40) than what you are going with, so cameras would not be possible because of the sheer count. Current detection looks to be the best way to do this in my case.

I am also a believer of using DCC as train control only. The more stuff you hang on the bus, the more chances sh.t is gonna happen. On top of that if DCC dies for a specific segment or section, so does all the other stuff hung on that segment or section. Troubleshooting becomes a real pain.

Merry Christmas!

I can definitely see that a macro would be useful under some circumstances but I am aiming for this layout to be 100% computer controlled. Automation works best if a single computer has complete control over the whole layout.

A few layout updates. I got the replacement and replaced the defective signal and it's working fine now. I am currently programming the rules for controlling the signals using JMRI Logix. Here is what the control logic looks like for one of the signals.

What this rule is saying is that given that the block detected by sensor LS19 is occupied and the turnout is set correctly for the next block, and the next block is unoccupied, then show a green signal, otherwise show a red signal.
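The same rule can be written as a small stand-alone Python function. This is only a sketch of the logic described in the paragraph above, not the actual Logix conditional or any JMRI API; the function and parameter names are invented for illustration.

```python
def signal_aspect(block_occupied: bool,
                  turnout_aligned: bool,
                  next_block_occupied: bool) -> str:
    """Return "green" only when all three conditions of the rule hold."""
    if block_occupied and turnout_aligned and not next_block_occupied:
        return "green"
    return "red"


# Train sitting in the LS19 block, route set, next block clear.
print(signal_aspect(True, True, False))
# Same train, but the next block is still occupied.
print(signal_aspect(True, True, True))
```

Note that the signal defaults to red whenever any one condition fails, including when no train is in the block at all, which matches the "otherwise show a red signal" part of the rule.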

Also, I removed the Wabbit discrete decoder from the layout for two reasons:

1. Now that I am using the TC-64, I might as well use one of its unused ports for feedback. The TC-64 has eight ports, each of which can be configured as input or output. I am using four of the ports as outputs for controlling the signals. I have now configured one of the ports as an input port to handle the Tortoise position feedback.

2. The Wabbit decoder was not properly handling the interrogation from JMRI that happens at startup time. This was a big nuisance since I want the software to detect turnout positions automatically at power-up.

So far so good, the TC-64 is working perfectly.
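The port split described above can be jotted down as a simple table to keep track of what is left over. This is just a bookkeeping sketch: the post gives the counts (eight ports, four signal outputs, one feedback input) but not which port does what, so the port numbers and role names below are hypothetical.

```python
from collections import Counter
from typing import Dict

# Hypothetical numbering: only the counts come from the post.
PORT_ROLES: Dict[int, str] = {
    1: "signal_output",
    2: "signal_output",
    3: "signal_output",
    4: "signal_output",
    5: "turnout_feedback_input",
    6: "unused",
    7: "unused",
    8: "unused",
}


def summarize(roles: Dict[int, str]) -> Counter:
    """Count how many ports are assigned to each role."""
    return Counter(roles.values())


summary = summarize(PORT_ROLES)
print(summary["signal_output"], summary["turnout_feedback_input"], summary["unused"])
```

With four outputs and one input in use, three of the eight ports remain free for later additions.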
